08: Outliers and Leverage

IMS, Ch. 7

Smith College

Feb 13, 2026

Recap

Sums of squares decomposition

\[ y_i - \bar{y} = y_i + (\hat{y}_i - \hat{y}_i) - \bar{y} \]

Rearrange terms:

\[ \underbrace{y_i - \bar{y}}_{\text{null residual}} = (\underbrace{y_i - \hat{y}_i}_{\text{model residual}}) + (\underbrace{\hat{y}_i - \bar{y}}_{\text{model improvement}}) \]

It can be shown (though it is not obvious) that:

\[ \underbrace{\sum_{i} (y_i - \bar{y})^2}_{SST} = \underbrace{\sum_{i} (y_i - \hat{y}_i)^2}_{SSE} + \underbrace{\sum_{i} (\hat{y}_i - \bar{y})^2}_{SSM} \]
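The decomposition above can be checked numerically. The following is a minimal sketch on synthetic data; the variable names, the simulated values, and the use of NumPy are assumptions for illustration and do not come from the course materials.

```python
import numpy as np

# Synthetic data (made up for illustration only)
rng = np.random.default_rng(0)
x = rng.uniform(150, 200, size=50)
y = -100 + 1.0 * x + rng.normal(0, 9, size=50)

# Least-squares slope and intercept for simple linear regression
b1 = np.sum((x - x.mean()) * (y - y.mean())) / np.sum((x - x.mean()) ** 2)
b0 = y.mean() - b1 * x.mean()
y_hat = b0 + b1 * x

sst = np.sum((y - y.mean()) ** 2)      # null residuals, squared and summed
sse = np.sum((y - y_hat) ** 2)         # model residuals, squared and summed
ssm = np.sum((y_hat - y.mean()) ** 2)  # model improvements, squared and summed

print("SST       =", sst)
print("SSE + SSM =", sse + ssm)
```

The two printed values should agree up to floating-point rounding. The identity relies on the fitted values coming from a least-squares fit with an intercept; for an arbitrary line the cross term does not vanish.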

Warmup

Body dimensions

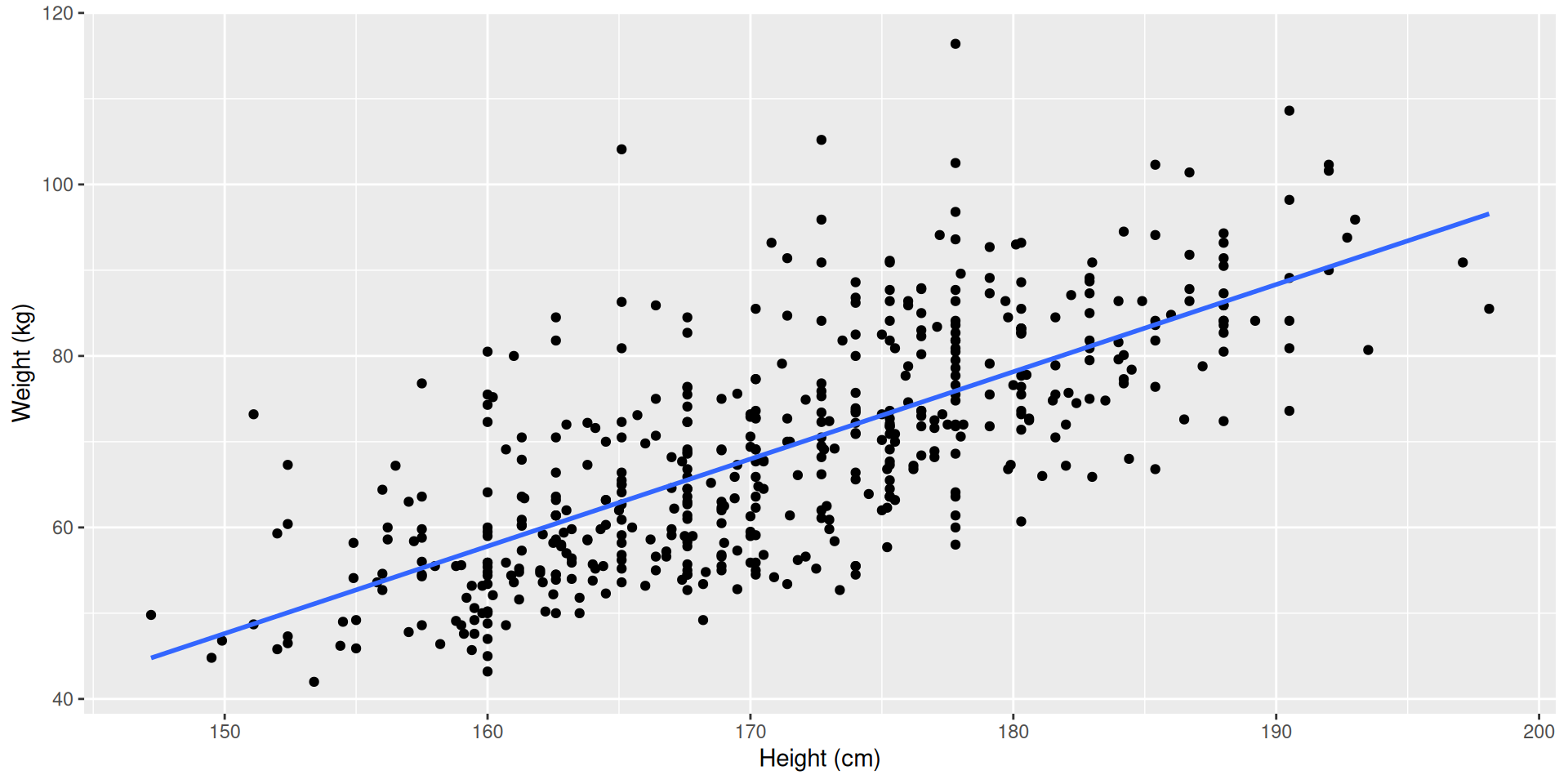

Consider the relationship between weight (kilograms) and height (centimeters) of 507 physically active individuals.

Your turn: Body dimensions

- Describe the relationship between height and weight.

- Write the equation of the regression line

- Interpret the slope and intercept in context

- The correlation coefficient for height and weight is 0.72. Calculate \(R^2\) and interpret it in context.
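As a reminder for the last question, in simple linear regression (one explanatory variable) the coefficient of determination equals the square of the correlation coefficient \(r\), which ties it back to the sums of squares from the recap:

\[ R^2 = r^2 = \frac{SSM}{SST} = 1 - \frac{SSE}{SST} \]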

Outliers, Leverage, and Influence

Outliers

- outlier: an observation that doesn’t seem to fit the general pattern of the data

- An observation with an extreme value of the explanatory variable is a point of high leverage

- A high leverage point that exerts disproportionate influence on the slope of the regression line is an influential point

- These points are important to identify

- We must understand their role in determining the regression line

- Don’t just throw them out without a good reason!
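To make the distinction between leverage and influence concrete, here is a minimal sketch on a tiny made-up data set. It assumes the standard leverage formula for simple linear regression, \(h_i = \frac{1}{n} + \frac{(x_i - \bar{x})^2}{\sum_j (x_j - \bar{x})^2}\), which is not stated on these slides. The helper names `fit_line` and `leverage` are hypothetical.

```python
import numpy as np

def fit_line(x, y):
    """Return (intercept, slope) of the least-squares line."""
    slope = np.sum((x - x.mean()) * (y - y.mean())) / np.sum((x - x.mean()) ** 2)
    return y.mean() - slope * x.mean(), slope

def leverage(x):
    """Leverage of each observation in simple linear regression (assumed formula)."""
    return 1 / len(x) + (x - x.mean()) ** 2 / np.sum((x - x.mean()) ** 2)

# Made-up data: the last x value is far from the others
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 20.0])
y_on_line = 2 * x                 # extreme point follows the pattern
y_off_line = y_on_line.copy()
y_off_line[-1] = 10.0             # extreme point breaks the pattern

h = leverage(x)
_, slope_without = fit_line(x[:-1], y_on_line[:-1])  # extreme point dropped
_, slope_on = fit_line(x, y_on_line)
_, slope_off = fit_line(x, y_off_line)

print("leverages:", h.round(3))
print("slope without the extreme point:", slope_without)
print("slope when it follows the pattern:", slope_on)
print("slope when it breaks the pattern:", slope_off)
```

In this sketch the last observation has high leverage in both versions of the data because its x value is extreme, but it only changes the slope noticeably when its y value departs from the pattern set by the other points.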

True or False?

- High leverage points always change the intercept of the regression line

- High leverage points are always close to \(\bar{x}\)

- A low leverage point is much more likely to be influential than a high leverage point

Regression with a categorical variable

One Categorical Explanatory Variable

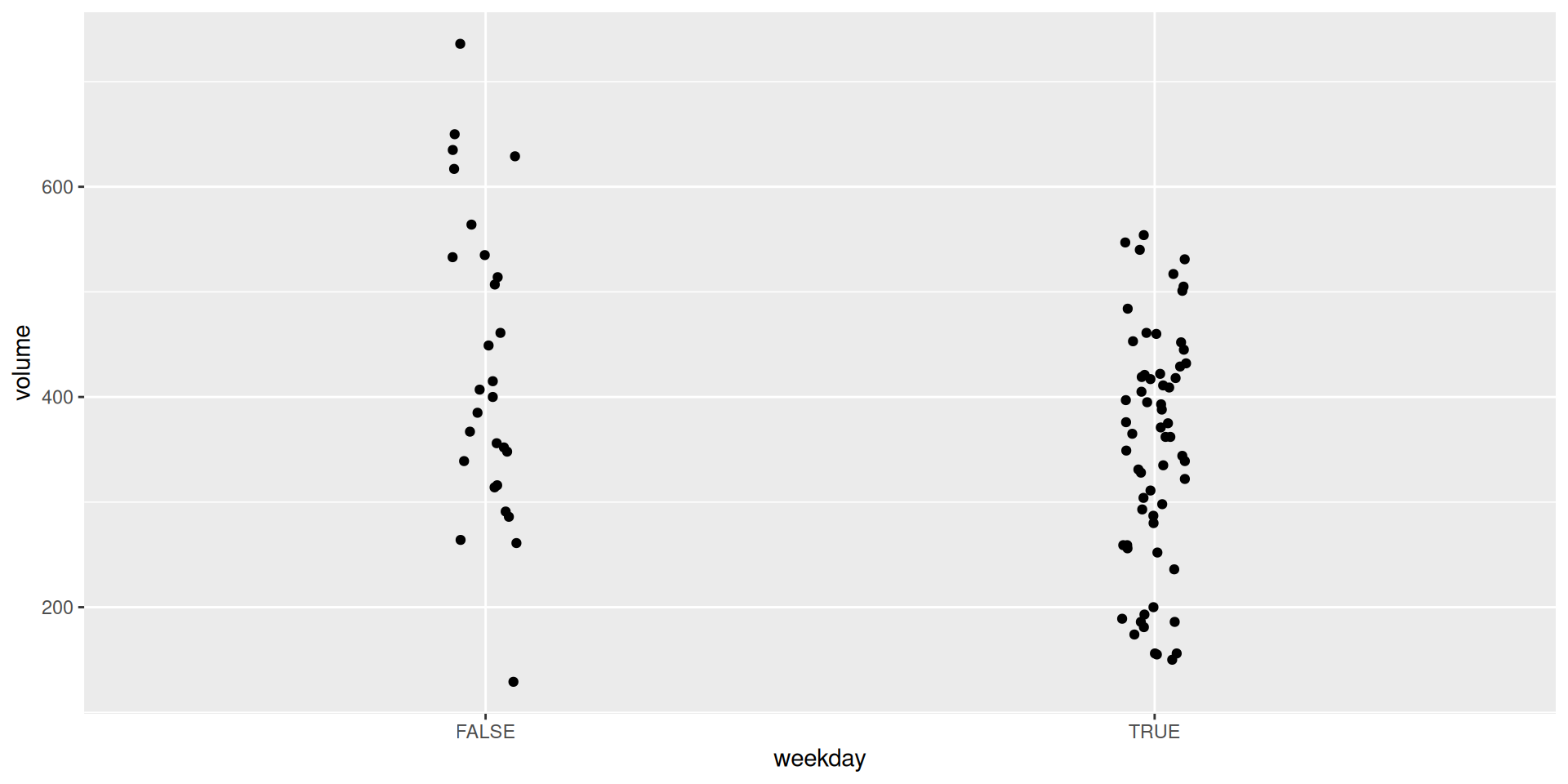

Recall the RailTrail example

- \(weekday\) is binary and takes on the values 0 and 1

- Such variables are often called indicator variables (by mathematicians) or dummy variables (by economists).

- A model using \(weekday\) has the form:

\[ \widehat{volume} = \hat{\beta}_0 + \hat{\beta}_1 \cdot weekday \]
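A minimal sketch of what this model estimates, using synthetic data rather than the actual RailTrail measurements. The simulated numbers and the coding 1 = weekday, 0 = weekend are assumptions for illustration. With a single 0/1 explanatory variable, the least-squares intercept should match the mean response of the 0 group, and the slope should match the difference between the two group means.

```python
import numpy as np

# Synthetic stand-in for the data (NOT the real RailTrail values)
rng = np.random.default_rng(1)
weekday = rng.integers(0, 2, size=90)   # assumed coding: 1 = weekday, 0 = weekend
volume = 430 - 80 * weekday + rng.normal(0, 100, size=90)

# Least-squares fit of volume on the indicator variable
w_dev = weekday - weekday.mean()
b1 = np.sum(w_dev * (volume - volume.mean())) / np.sum(w_dev ** 2)
b0 = volume.mean() - b1 * weekday.mean()

# Group means computed directly
mean_weekend = volume[weekday == 0].mean()
mean_weekday = volume[weekday == 1].mean()

print("intercept:", b0, "| weekend mean:", mean_weekend)
print("intercept + slope:", b0 + b1, "| weekday mean:", mean_weekday)
```

Each printed pair should agree up to rounding, so the fitted "line" only ever produces two predictions, one per group.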

RailTrail with weekday

Your turn: RailTrail predictions

- How many riders does the model expect will visit the RailTrail on a weekday?

- What about a weekend?

- What if it’s 80 degrees out?

- How would you draw this model on the scatterplot?

- Estimate the \(R^2\) for this model. Is it greater or less than the \(R^2\) for the model with temperature as an explanatory variable?